SWE Atlas - Refactoring

Evaluating an agent's ability to restructure code while preserving behavior.

TL;DR:

We are launching SWE Atlas - Refactoring, the third benchmark in the SWE Atlas evaluation suite for coding agents.

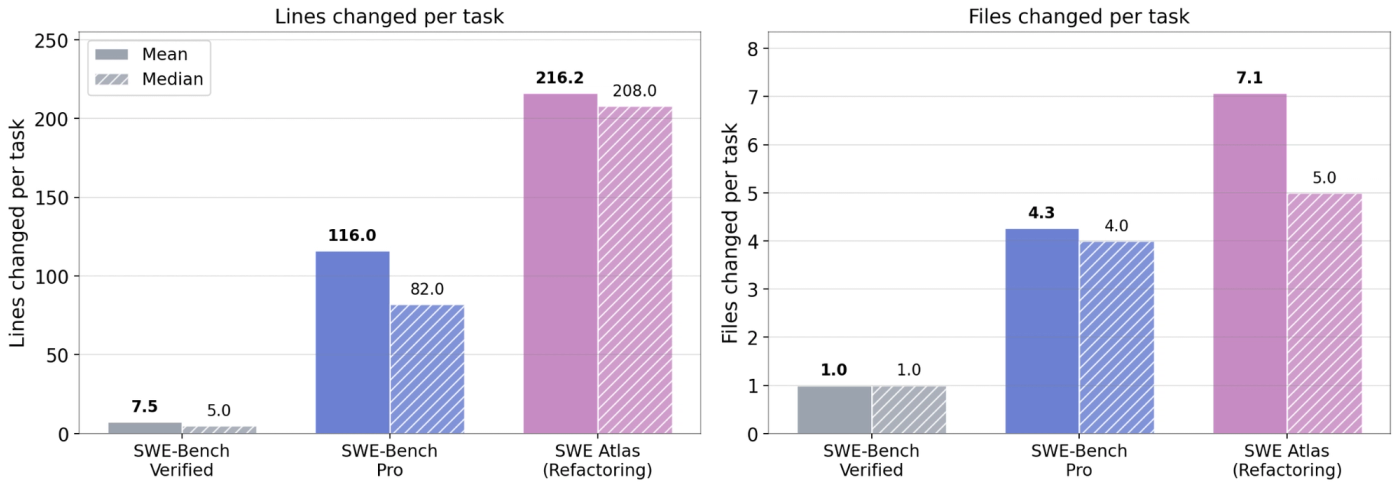

This benchmark evaluates a model's ability to restructure production code while preserving behavior. These tasks are significantly larger in scope than SWE-Bench Pro, with 2X lines of code changes and 1.7X file edits needed.

Achieving high scores in this benchmark requires the model to produce well-structured refactors that pass existing tests, avoid regressions, and satisfy comprehensive rubric criteria across code maintainability, documentation, artifact cleanup, and anti-pattern checks.

The best-performing configuration, Claude Opus 4.7 with Claude Code, scores 48.57%.

Open models significantly lag behind closed frontier models, often introducing regressions or failing to fully restructure the code as specified. This highlights new avenues for research to build strong open models for the ML community.

Overview

SWE Atlas is a suite of benchmarks for evaluating AI coding agents across a spectrum of professional software engineering tasks. Rather than measuring a single skill, SWE Atlas consists of three leaderboards that target distinct and complementary capabilities in the Software Development Cycle:

Codebase QnA - Understand complex codebases through runtime analysis and multi-file reasoning questions.

Test Writing - Write meaningful production-grade tests for a given behavior in the repository.

Refactoring - Restructure code to improve performance & readability while preserving behavior.

Refactoring consists of 70 high-quality tasks that target a coding agent's refactoring ability: the agent is given access to a code repository inside a Docker container, with a refactoring task described in the prompt. These tasks are agentic by design; the prompts describe the desired restructuring at a high level, specifying what code should be extracted, consolidated, or reorganized.

The agent needs to autonomously explore the codebase, understand the existing architecture, perform the refactoring across multiple files spread across the codebase, and ensure that all existing tests continue to pass. The refactored code is expected to correctly restructure the specified components, clean up dead code and stale artifacts, update documentation to reflect the changes, and avoid introducing regressions or breaking changes.

The benchmark consists of tasks drawn from 10 production repositories across 6 programming languages: Go, TypeScript, Python, C, C++, and JavaScript. Even the top models score under 50%, leaving substantial room for improvement.

SWE Atlas Refactoring tasks are significantly larger in scope than SWE-Bench Pro: the reference solutions require 2X the number of changed lines of code and 1.7X the number of edited files.

Dataset Description

Repository. Similar to SWE-Bench Pro, the repositories represent real-world software complexity: mail servers, terminal emulators, object storage systems, observability platforms, secret scanners, etc. These are large, actively maintained open-source codebases with non-trivial architectures. They are also contamination-resistant, using strong copyleft licenses (e.g., GPL).

Environment. For each repository, engineers build a reproducible Docker image pinned to a specific commit, with all dependencies pre-installed, such that the software can be built, run, and tested.

Prompts. Professional software engineers and technical experts with significant coding and agentic experience write problem statements that require multi-step reasoning across the codebase. Experts first spend time familiarizing themselves with each repository's functionality, implementation, and edge cases before authoring refactoring tasks. We ask that task prompts be written in natural language, emulating how developers interact with coding agents like Claude Code or Cursor; the prompts are therefore, by nature, underspecified about implementation details.

The benchmark covers 4 types of refactoring:

Decomposition: Break apart monolithic implementations: split overly long functions, untangle shared mutable state across goroutines or closures, and separate concerns that have been bundled together. (29 tasks)

Interface evolution: Strengthen or restructure a public interface by replacing any / untyped containers with typed generics, decoupling coupled options, or migrating callers from a deprecated API to a successor. These tasks touch every call site of the affected interface across the codebase. (20 tasks)

Extraction: Pull duplicated logic, primitives, or helpers out of a host package and into a dedicated shared package or module, then route the original sites through the new abstraction. (14 tasks)

Relocation: Move code to a more appropriate place in the codebase, promote a component out of a directory it has outgrown, restructure module boundaries, or split overly large files. (7 tasks)

We illustrate an example task below from the simple-login/app repository:

Example: Extract the custom domain deletion logic from the controller into a dedicated utility function for better separation of concerns. The controller should call this new function instead of having the deletion code inline. Keep the existing behavior unchanged. I've already taken care of all changes to the test files. Do NOT modify any test files or testing logic in any way. Your task is to make the minimal changes to non-test source files only.

Each response is evaluated in 2 steps:

1. Test pass: The agent's refactored code must pass all relevant existing tests. A master validator script compares the baseline (original code + test patch) against the agent's submission (agent code + test patch), checking for no PASS_TO_FAIL regressions and no MISSING_TO_FAIL errors (additional tests introduced for the new interface). This ensures the refactoring preserves all existing behavior.

Pass criteria: Zero P2F regressions and zero M2F errors.

Note: The agent must NOT modify any test files, and the agent is only responsible for changing source code. Any modifications to test files result in automatic failure.

2. Rubric grading: The agent's diff is then graded in detail using an LLM judge (Claude Opus 4.5) against a comprehensive rubric set, which consists of both must-have and nice-to-have rubrics, each receiving a binary pass or fail judgement.

Pass criteria: All must-have rubrics are graded as a Pass.

A task is considered resolved if it passes all the tests and all the must-have rubrics.
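The two-step check above can be sketched as follows. This is a minimal illustration under stated assumptions, not the benchmark's actual validator: the `"PASS"` / `"FAIL"` status strings, the `RubricResult` type, and the function names `find_regressions` and `is_resolved` are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class RubricResult:
    title: str
    must_have: bool  # False for nice-to-have rubrics
    passed: bool     # binary judgement from the LLM judge


def find_regressions(baseline: dict[str, str], submission: dict[str, str]) -> dict[str, list[str]]:
    """Compare per-test statuses of the baseline run against the submission run.

    P2F: the test passed on the baseline but fails on the submission.
    M2F: the test has no baseline result (assumed here to mean "missing")
         and fails on the submission.
    """
    p2f, m2f = [], []
    for name, status in submission.items():
        if status != "FAIL":
            continue
        before = baseline.get(name)
        if before == "PASS":
            p2f.append(name)
        elif before is None:
            m2f.append(name)
    return {"P2F": sorted(p2f), "M2F": sorted(m2f)}


def is_resolved(baseline: dict[str, str], submission: dict[str, str],
                rubrics: list[RubricResult]) -> bool:
    """Resolved = zero P2F, zero M2F, and every must-have rubric passed."""
    regressions = find_regressions(baseline, submission)
    tests_ok = not regressions["P2F"] and not regressions["M2F"]
    must_haves_ok = all(r.passed for r in rubrics if r.must_have)
    return tests_ok and must_haves_ok
```

Note that a failed nice-to-have rubric does not affect the outcome in this sketch, which mirrors the pass criteria stated above.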

Rubric creation. For each task, human experts define a structured rubric of evaluation criteria. Each criterion checks for a specific, verifiable factual claim. Rubrics follow standard design principles: specific (with no or little room for multiple interpretations), atomic (testing one distinct aspect), self-contained (gradable without external knowledge).

Rubrics are categorized into 4 axes:

Code Maintainability (must have) - Checks if the refactoring correctly restructures the code as specified: extractions, consolidations, interface changes, removal of old implementations, and proper wiring of new shared components.

Artifact Cleanup (must have) - Checks if dead code, unused imports, stale files, and other artifacts left behind by the refactoring are properly removed.

Anti-Patterns (must have) - Penalties for regressions, breaking changes, and anti-patterns. For example: removing functionality entirely instead of extracting it, changing function signatures without updating all call sites, or introducing circular dependencies.

Documentation Maintainability (nice to have) - Checks if documentation, comments, and docstrings are updated to reflect the refactored code structure.

Here is a subset of rubrics for the prompt shared above for illustration purposes:

code maintainability:

- title: "1.1: Creates delete_custom_domain(domain: CustomDomain) function in app/custom_domain_utils.py"

- title: "1.2: Calls delete_custom_domain from the domain detail controller"

- title: "1.3: Sets pending_deletion flag to True in delete_custom_domain"

- title: "1.4: Schedules deletion job in delete_custom_domain using Job.create"

artifact cleanup:

- title: "3.1: Removes all 4 unused imports from the domain detail controller"

anti-patterns:

- title: "4.1: Removes the domain deletion functionality entirely instead of extracting it"

- title: "4.2: Changes any of the 2 deletion job parameters (JOB_DELETE_DOMAIN name, custom_domain_id payload key)"
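To make the rubrics above concrete, here is a rough sketch of the shape of refactor they describe. It is not the simple-login/app code: `CustomDomain`, `Job`, and `SCHEDULED_JOBS` are simplified stand-ins, the string value of `JOB_DELETE_DOMAIN` is invented for the example, and `domain_detail_delete` is a hypothetical name for the controller.

```python
from dataclasses import dataclass

# Hypothetical value; only the constant's name appears in the rubric.
JOB_DELETE_DOMAIN = "delete-domain"

# Stand-in for a real job queue, so the sketch is self-contained.
SCHEDULED_JOBS: list = []


@dataclass
class CustomDomain:
    id: int
    pending_deletion: bool = False


@dataclass
class Job:
    name: str
    payload: dict

    @classmethod
    def create(cls, name: str, payload: dict) -> "Job":
        job = cls(name=name, payload=payload)
        SCHEDULED_JOBS.append(job)
        return job


def delete_custom_domain(domain: CustomDomain) -> None:
    """Extracted utility (rubrics 1.1, 1.3, 1.4)."""
    domain.pending_deletion = True
    # Rubric 4.2: job name and payload key stay exactly as before the refactor.
    Job.create(name=JOB_DELETE_DOMAIN, payload={"custom_domain_id": domain.id})


def domain_detail_delete(domain: CustomDomain) -> str:
    """Controller after the refactor: delegates instead of inlining (rubric 1.2)."""
    delete_custom_domain(domain)
    return "deletion scheduled"
```

An anti-pattern in the sense of rubric 4.1 would be a controller that simply drops the deletion code without calling the new function.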

Quality Assurance. We implement a detailed multistep pipeline to ensure that tasks are of the highest quality. Throughout the process, experts are supplemented with LLM based agentic evaluators that monitor data quality in the same task environment that the expert is working in.

During task creation, expert contributors ensure that each rubric is fair, factual and verifiable, and ties in to the codebase's best practices and conventions. They also ensure that rubric grading is consistent when graded by multiple LLM judges, and that tasks are of sufficient difficulty.

Post-creation, all tasks undergo a human consensus review of their rubrics with other independent experts and our internal ML research team, to check for over-prescriptive rubrics that are too closely tied to the reference implementation as well as rubrics that unfairly penalize responses from agents.

Additionally, each task undergoes gold validation: the reference (gold) patch is applied to the repository, and all relevant tests are run to confirm that the gold patch passes the same evaluation pipeline that agents are subjected to.

Experiments

Evaluation Metric: Task Resolve Rate

During evaluation, the agent operates inside a sandboxed Docker container with the target repository mounted. The agent has access to standard shell tools (bash, grep, find, etc.) and can build and run the software and test its implementation. The agent explores the repository, understands the existing code structure, performs the refactoring, and submits a patch containing its changes.

The primary metric is the task Resolve Rate, and a task is considered resolved if it passes both checks listed above (Test Pass + Rubrics).

Results

We ran a suite of frontier closed and open coding models on the benchmark in Modal sandboxes, using the Harbor Framework for agent evaluation. We observe that even frontier models that score high in issue resolution (e.g. >80% on SWE-Bench-Verified) score <50% on refactoring tasks, highlighting the challenging nature of large-scale code restructuring.

We also evaluated models using the Mini-SWE-Agent harness to understand their capabilities to address the tasks via a common bash toolkit. We modified its system prompt and instance template to focus on refactoring instead of issue resolution.

Additionally, we evaluated commonly used proprietary coding clients such as Claude Code (with Opus 4.7, Opus 4.6, and Sonnet 4.6) and Codex (with GPT-5.3 Codex and GPT-5.4).

Analysis

Failure Mode Breakdown

Most models achieve a high test pass rate, indicating that they can generally perform refactorings that preserve existing behavior. However, some tasks involve complex multi-file restructurings where models introduce subtle regressions.

The biggest failure mode is rubrics - models often perform incomplete refactorings, missing steps like removing old implementations after extraction, updating all call sites, or cleaning up unused imports. This category truly differentiates frontier coding models from the rest of the pack.

We break down several of these insights here to help developers better understand model behaviors.

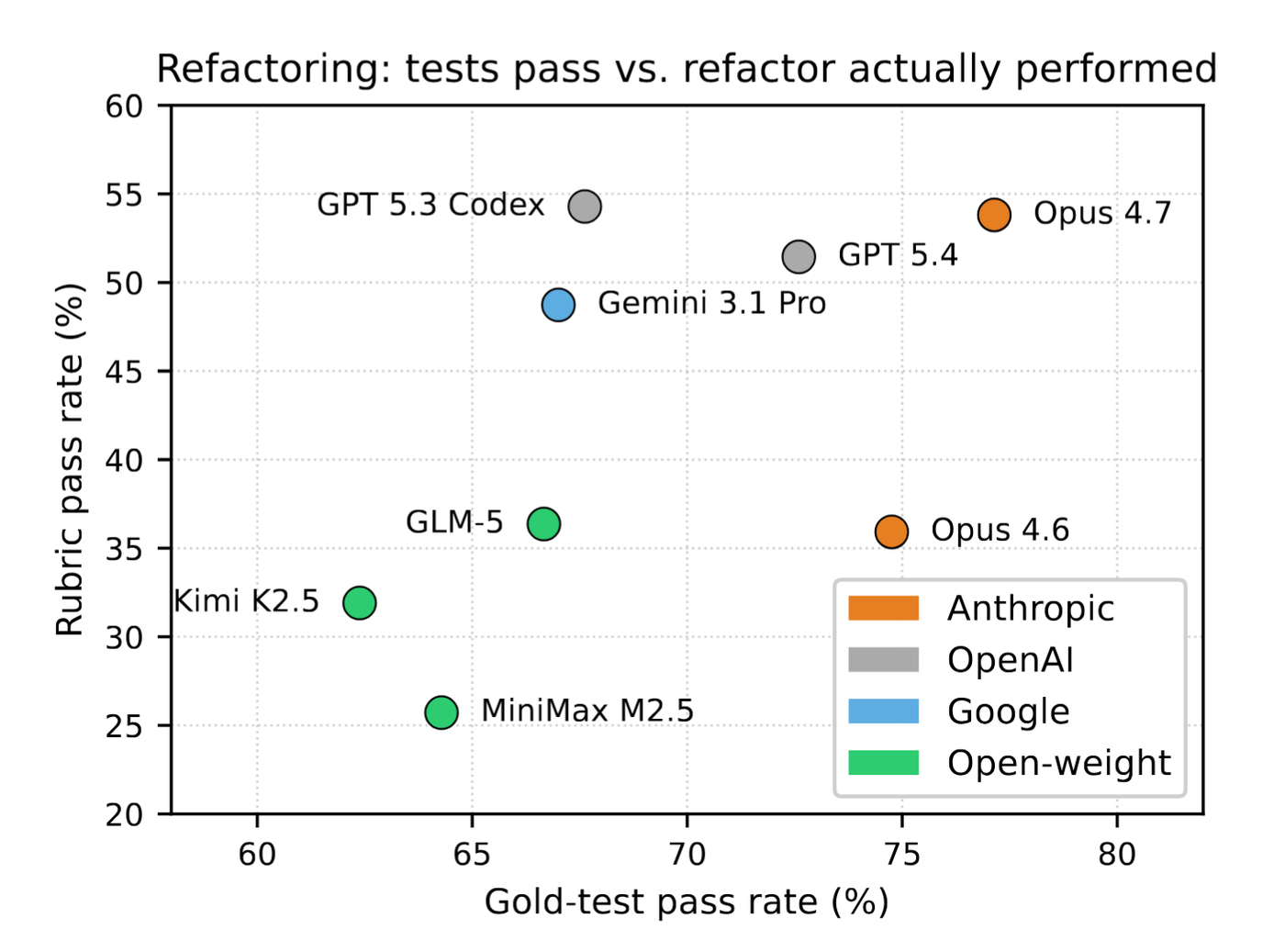

Refactoring is evaluated using a programmatic test suite (gold tests) and a rubric set. Tests mainly check whether behaviour is preserved and, if a new interface is introduced, whether it is implemented correctly. Rubrics evaluate whether the refactoring was actually effective and carried out with professional rigor.

The figure above shows the task-level pass rate across Tests and the three must-have rubric categories (Code Maintainability, Artifact Cleanup, and Anti-Patterns, i.e. the negative rubrics): what fraction of tasks pass all tests versus what fraction of tasks pass all mandatory rubrics, for models using the Mini-SWE-Agent scaffold.

We see that the test pass rate is concentrated within a narrow band of 65-75%, while the real differentiation arises from rubric pass rates: weaker models score below 35%, while stronger models reach up to 55%, still leaving substantial room for improvement.
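A task-level pass rate per rubric category can be computed roughly as below. This is a sketch under assumptions: the input layout (`task_id` mapped to `(category, passed)` pairs) and the function name `category_pass_rates` are hypothetical, and a task is counted for a category only if it has at least one rubric in that category.

```python
from collections import defaultdict


def category_pass_rates(results: dict) -> dict:
    """Fraction of tasks that pass ALL rubrics of each category.

    results maps task_id -> list of (category, passed) pairs.
    """
    passed = defaultdict(int)
    total = defaultdict(int)
    for rubrics in results.values():
        by_category = defaultdict(list)
        for category, ok in rubrics:
            by_category[category].append(ok)
        for category, outcomes in by_category.items():
            total[category] += 1
            # A single failed rubric fails the whole category for this task.
            passed[category] += all(outcomes)
    return {category: passed[category] / total[category] for category in total}
```

Because one failed rubric fails the category for the whole task, this task-level rate is stricter than the fraction of individual rubrics passed.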

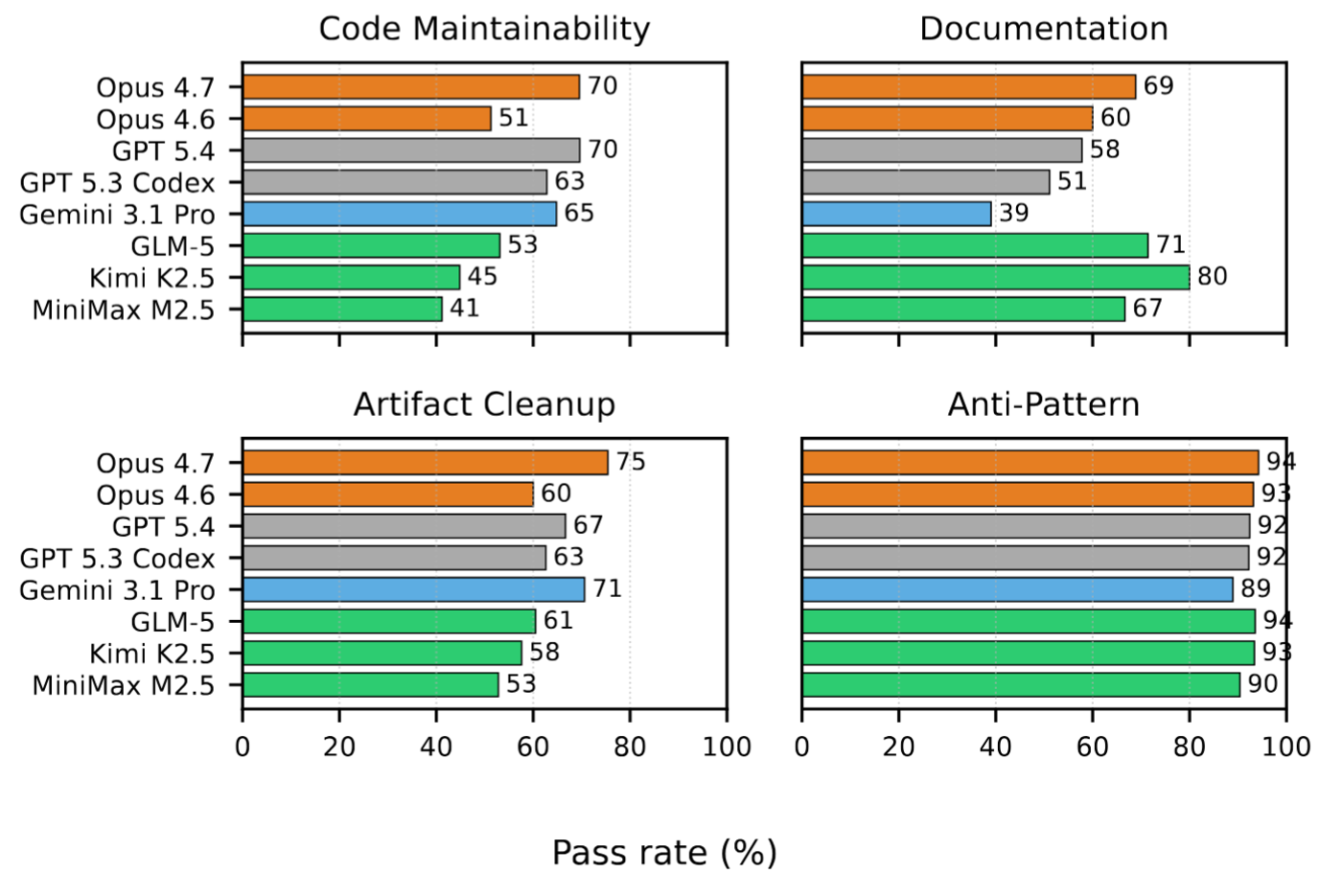

Since rubrics are designed to measure software engineering rigor, we break rubric failures further across the different categories.

Code Maintainability: This is the most critical axis, checking whether the agent correctly performed the structural refactoring. Common failures include: partially extracting logic, creating the new module but not wiring it into all consumers, or missing edge cases in the consolidation (e.g., merging two implementations but dropping functionality from one).

Artifact Cleanup: This checks if the agent removes dead code and stale artifacts after refactoring. A common pattern is that models extract logic into a new shared module but forget to remove the original local implementation, resulting in duplicated code. Unused imports left behind after moving code are another frequent miss.

Anti-patterns: These rubrics penalize regressions, breaking changes, and anti-patterns. Models rarely fail here; when they do, the most common failures are removing functionality entirely instead of extracting it to a new location, changing function signatures without updating all call sites, and breaking module boundaries by introducing circular dependencies.

Documentation Maintainability: This checks if documentation and comments are updated to reflect the new code structure. Most models perform poorly here, often leaving stale comments that reference the pre-refactoring structure or failing to add documentation for newly created shared modules.

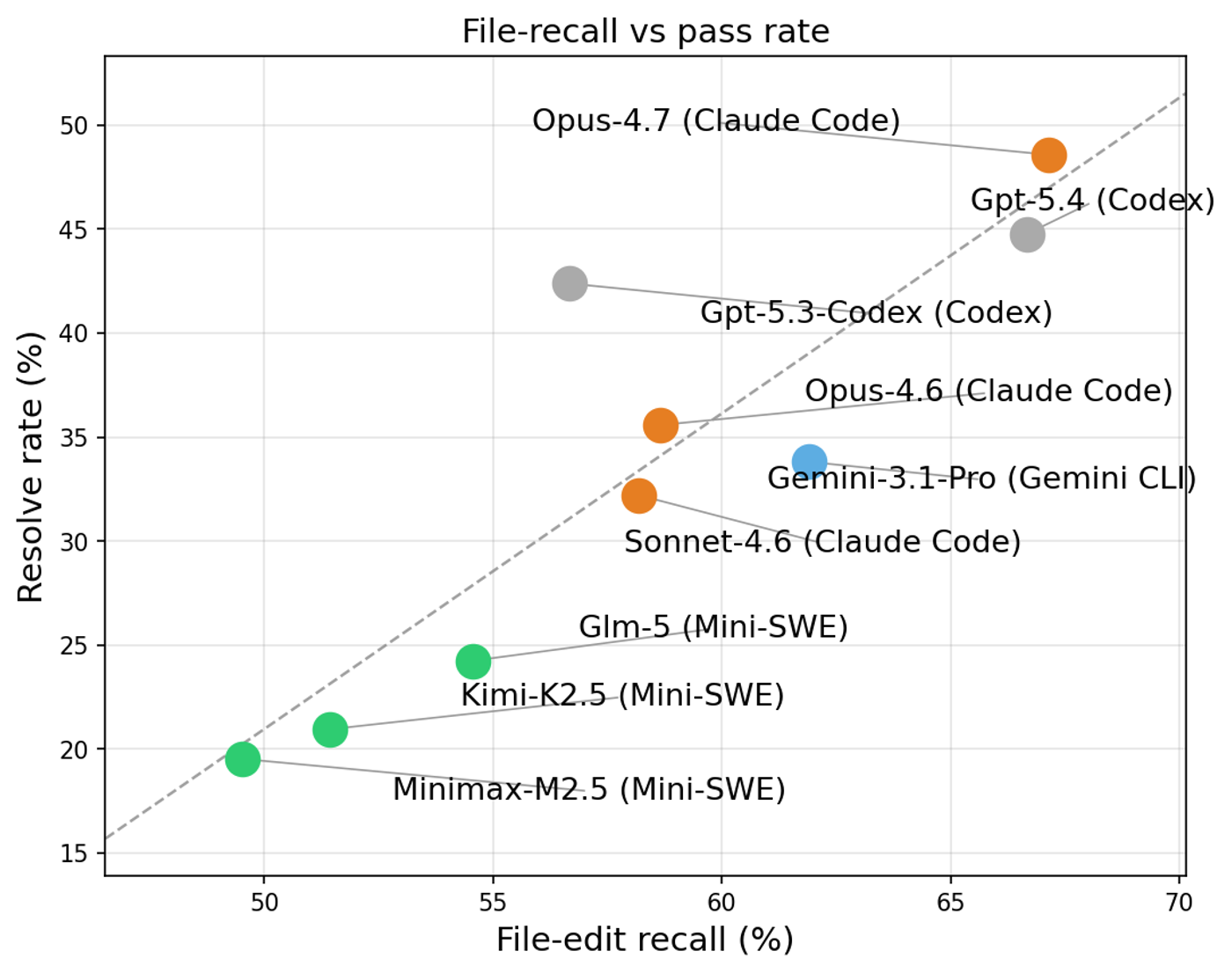

Refactor Completeness

Refactoring tasks are designed so that success requires a widespread refactor touching many files spread across the codebase (such as call sites across many modules), so good refactors have a high recall of the files that need to be refactored. The figure above shows, for each model, what fraction of the files that need changes it manages to edit. Frontier models have higher refactor coverage, which helps them pass all the Code Maintainability rubrics and correlates strongly with the overall task success rate.
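File-level recall of this kind can be sketched as a simple set computation. The assumption here is that the set of files needing changes is known per task (for example, from a reference patch); the function name `file_recall` is hypothetical.

```python
def file_recall(edited_files, required_files) -> float:
    """Fraction of the files that need changes which the agent actually edited."""
    required = set(required_files)
    if not required:
        return 1.0  # nothing was required, so nothing was missed
    return len(required & set(edited_files)) / len(required)


# The agent touched two of the four required files, plus one unrelated file.
recall = file_recall(
    edited_files={"a.py", "b.py", "unrelated.py"},
    required_files={"a.py", "b.py", "c.py", "d.py"},
)
print(recall)  # 0.5
```

Recall ignores extra edits by design: touching an unrelated file does not lower the score, but missing a call site does.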

Trajectory and Answer Analysis

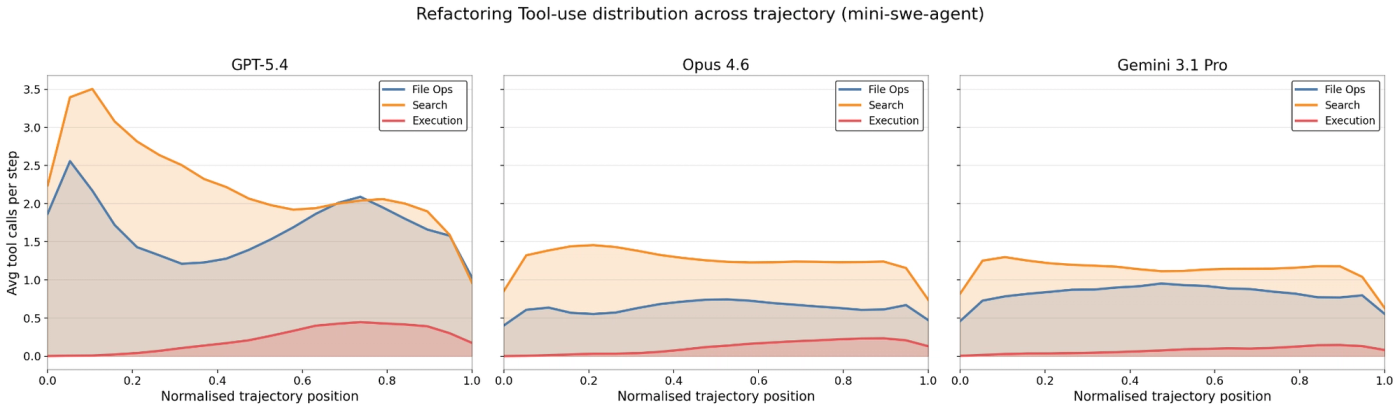

Here we break down model trajectories to better understand how different models approach the tasks. We used trajectories from the Mini-SWE-Agent scaffold for this analysis, which executes a single bash command in each step and strips away the complexities of custom scaffolds. Since the bash commands issued by models often chain multiple commands together (using &&, ;, etc.), we split them into atomic commands and aggregate them into the following 3 categories:

File Ops - Read (cat, head, tail, etc), Write (mkdir, touch, rm, cp, mv, etc)

Searches - Search (grep, rg, ag, find, xargs), Navigation (ls, cd, pwd, tree, etc)

Execution - Test runners (pytest, jest, yarn test, go test), Build/run (python, node, make, cargo, bash), Package (pip install, npm install, yarn)
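The splitting and bucketing step can be approximated as follows. This is a simplified sketch, not the analysis code used for the figure: the category table only covers the commands named above, classification uses the first word of each atomic command, and the names `split_atomic` and `categorize` are hypothetical. It relies on `shlex` with `punctuation_chars=True` (Python 3.8+ when combined with `whitespace_split`).

```python
from __future__ import annotations

import shlex
from collections import Counter

# Category table built from the lists above, keyed by the first word of a command.
CATEGORIES = {
    "file_ops": {"cat", "head", "tail", "mkdir", "touch", "rm", "cp", "mv"},
    "searches": {"grep", "rg", "ag", "find", "xargs", "ls", "cd", "pwd", "tree"},
    "execution": {"pytest", "jest", "yarn", "go", "python", "node", "make",
                  "cargo", "bash", "pip", "npm"},
}
SEPARATORS = {"&&", "||", ";", "|"}


def split_atomic(command: str) -> list[list[str]]:
    """Split a chained shell command into atomic commands (lists of tokens)."""
    lexer = shlex.shlex(command, posix=True, punctuation_chars=True)
    lexer.whitespace_split = True  # keep flags and paths as single tokens
    atomic, current = [], []
    for token in lexer:
        if token in SEPARATORS:
            if current:
                atomic.append(current)
            current = []
        else:
            current.append(token)
    if current:
        atomic.append(current)
    return atomic


def categorize(command: str) -> Counter:
    """Count atomic commands per category; unknown programs go to 'other'."""
    counts = Counter()
    for tokens in split_atomic(command):
        program = tokens[0]
        category = next(
            (name for name, programs in CATEGORIES.items() if program in programs),
            "other",
        )
        counts[category] += 1
    return counts
```

For example, `categorize("cd src && grep -rn 'foo' . | head -5; pytest -q")` counts two searches (`cd`, `grep`), one file operation (`head`), and one execution (`pytest`). Edge cases such as subshells, heredocs, or a quoted lone `;` are not handled.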

We see that Codebase Search and Reads are strongly correlated with the model's pass rates for refactoring tasks, as understanding the full scope of code that needs to change is critical. Models that spend more time exploring the codebase and understanding dependencies before making changes tend to produce more complete refactorings.

Refactoring tasks, more than issue resolution or test writing, require models to understand the full dependency graph of the code being restructured. A model must identify all consumers of the code being moved, all imports that need updating, and all tests that exercise the refactored paths. This makes thorough codebase exploration a prerequisite for success.

Put together, a model's ability to perform deep exploration of the codebase, understand cross-file dependencies, and execute multi-file refactorings while preserving all existing behavior contributes to its high performance on the refactoring benchmark.

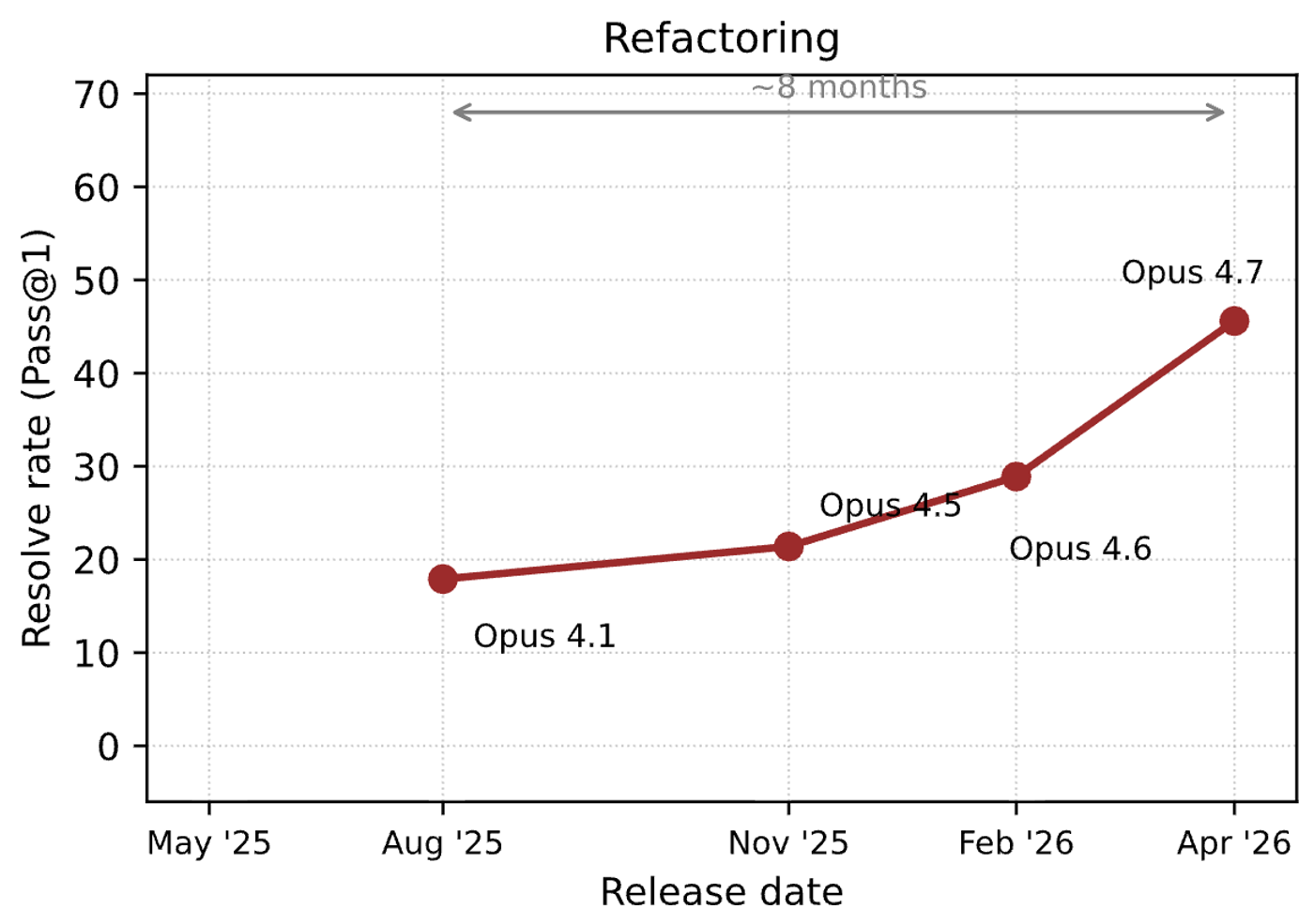

Model Performance Over Time

When we track the performance of the Opus 4.x series of models on a random subset of 30 tasks from the benchmark, we see an accelerating improvement. Over a span of 8 months, scores went from under 20% for Opus 4.1 to 45% for Opus 4.7, more than doubling in under a year. The improvement was also smooth, with both test and rubric scores improving with each version release.

Performance Comparison

| Model (harness) | Resolve rate (%) |
| --- | --- |
| Opus-4.7 (Claude Code) | 48.57 ± 6.73 |
| Gpt-5.5 (Codex) | 44.79 ± 6.76 |
| Gpt-5.4 (Codex) | 44.29 ± 6.76 |
| Gpt-5.3 (Codex) | 42.38 ± 6.76 |
| Opus-4.6 (Claude Code) | 35.58 ± 6.82 |
| Gemini-3.1-Pro (Gemini CLI) | 33.81 ± 6.64 |
| Sonnet-4.6 (Claude Code) | 32.21 ± 6.77 |
| Glm-5 (Mini-SWE-Agent) | 24.24 ± 6.27 |
| Kimi-K2.5 (Mini-SWE-Agent) | 20.95 ± 6.00 |
| Minimax-M2.5 (Mini-SWE-Agent) | 19.52 ± 5.89 |
| Gemini-3-Flash (Mini-SWE-Agent) | 10.00 ± 4.80 |